We seem to be living through a pivotal moment, often dubbed the “Age of AI”. It feels like barely a day goes by without news of another AI breakthrough that promises to revolutionize how we live, work, and perhaps even think. This rapid advancement naturally leads to big questions: What does it mean to be human when machines become increasingly intelligent? Is intelligence really what defines us?

Intelligence Isn’t Everything

I’d argue that intelligence, while a significant human trait, isn’t the sole factor that makes us human. We are a complex mix: intelligence, yes, but also feelings, creativity, physical presence, social interactions, and much more. While our particular brand of intelligence often differentiates us from other animals, it doesn’t make us objectively more “intelligent” in every context. A bird’s navigational intelligence, for instance, far surpasses that of most humans. (Read “If Nietzsche Were a Narwhal: What Animal Intelligence Reveals About Human Stupidity” for more examples and details.) Similarly, AI is rapidly becoming more “intelligent” than humans in specific, and increasingly general, domains. It can process vast amounts of data, identify patterns we miss, and even, as we’ll see, contribute to creative and scientific endeavors. The key, then, isn’t to compete with AI on raw intelligence but to leverage its capabilities to enhance our own, while actively promoting and valuing the other uniquely human aspects: our empathy, our creativity, our ability to connect and feel.

Even Human Experts Aren’t Perfect

It’s also crucial to remember that even expert humans are fallible. We often place immense trust in experts such as medical doctors, but studies show that their “intelligence”, or accuracy, has limits. For example, one fascinating study found that doctors’ average diagnostic accuracy was just over 55% in straightforward cases and dropped to less than 6% in more complex ones (see “Physicians’ Diagnostic Accuracy, Confidence, and Resource Requests”). Perhaps even more surprisingly, their confidence remained relatively high regardless of the case’s difficulty (around 72% for easy cases vs. 64% for hard ones). In other words, accuracy fell by roughly 50 percentage points while confidence fell by only about 8. What does this tell us? Even the best human experts make mistakes, and they often aren’t aware when they might be wrong. So, as AI systems become increasingly capable, potentially surpassing human experts in many areas, we must remember that they too will make mistakes. Blind trust is unwise, whether it is placed in a human or an AI.

Agency and Judgment: The Human Domain

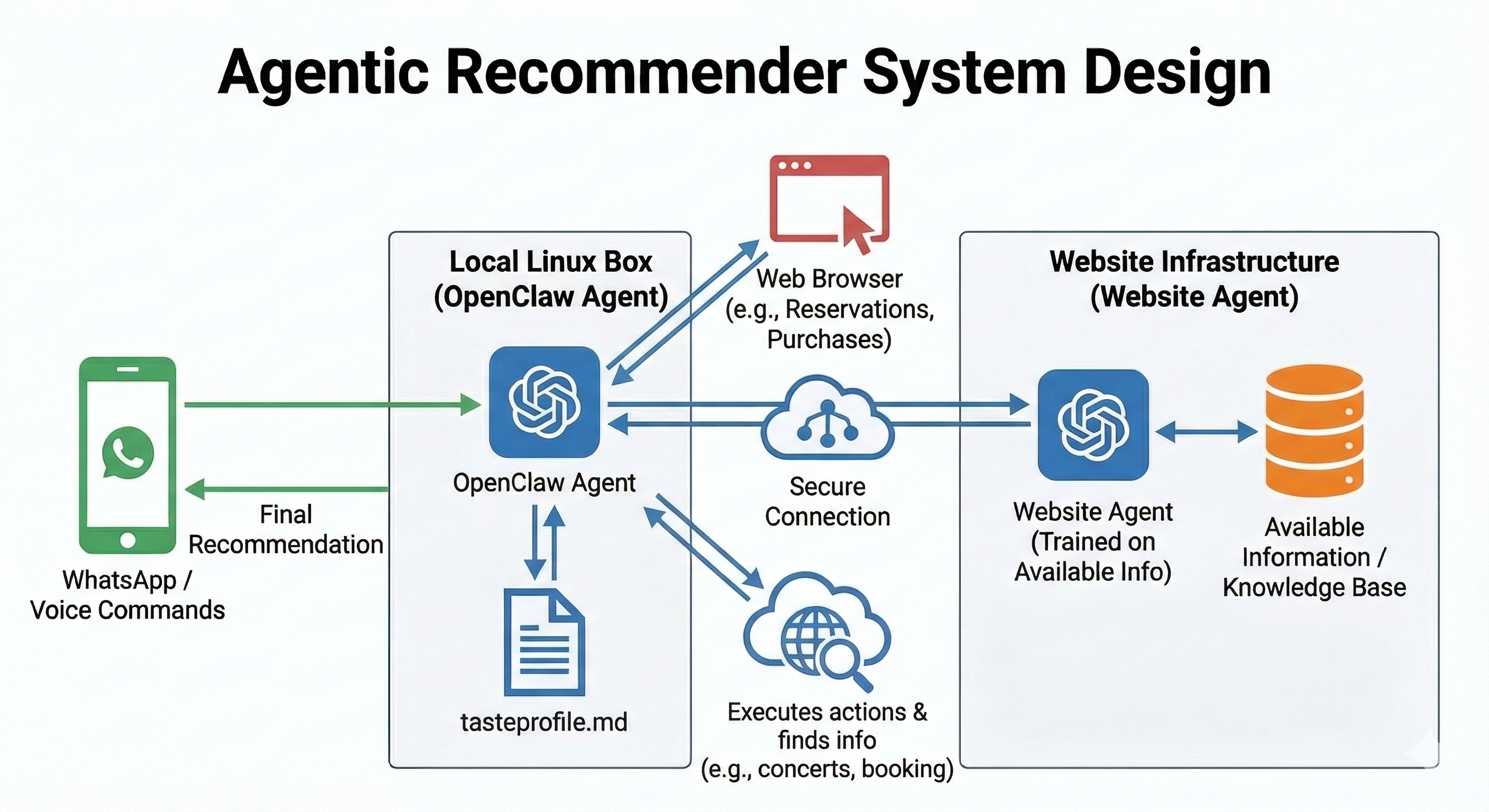

This brings us to a critical point: decisions are for humans. While AI can provide insights, predictions, and recommendations at a scale and speed we can’t match, it lacks true agency, judgment, and the capacity for responsibility. An AI can’t be held accountable for the consequences of a decision. This becomes even more critical as we increasingly hear about “AI agents” capable of performing complex tasks autonomously. However powerful, these agents still cannot take genuine responsibility or be held accountable for their actions and outcomes. We must therefore resist the temptation to outsource our agency to these systems and let AI make important decisions for us; the ultimate responsibility must remain firmly in human hands. Use AI as a powerful tool, a co-pilot, or even a knowledgeable advisor, but never blindly trust its output. Just as you might seek a second opinion from another human expert, consider getting multiple perspectives, whether from different AIs or from a combination of AI and human experts. Ultimately, you must own the decision. That requires critical thinking, careful evaluation, and our uniquely human blend of intelligence, values, and intuition.

AI in Science: Disruption and Opportunity

The world of scientific discovery is already being profoundly affected by AI. In a fascinating, and perhaps slightly unnerving, development, a scientific paper generated entirely by AI recently passed peer review at a workshop of a top-tier machine learning conference. This experiment by Sakana AI is likely just the beginning.

This raises questions about the future of scientific innovation. Some, like Thomas Wolf in his piece “The Einstein AI Model”, argue that AI, lacking true understanding and creativity, will never be able to genuinely innovate in science. While his narrative is compelling and worth reading, I have to disagree. History, even recent AI history, suggests otherwise. Remember AlphaGo’s groundbreaking Move 37? It was a move born from patterns learned through deep reinforcement learning (RL) and self-play, a move that human Go masters considered genuinely novel and creative.

Emerging research indicates that LLMs, trained using similar RL and self-play techniques, can generate scientific hypotheses evaluated as more novel than those produced by human scientists. An AI “co-scientist” could potentially accelerate discovery far beyond what adding another human expert to the team could achieve (see this paper for details). This disruption is happening now. Scientific organizations need to take this seriously, not by fighting it, but by embracing it and figuring out how to work with AI to push the boundaries of knowledge faster and further than ever before.

Critical Thinking in the Age of AI: Are We Getting Dumber?

A common fear is that relying on AI will atrophy our own cognitive skills, particularly critical thinking. Headlines often scream that “GenAI makes you dumb”. But does it?

A recent study by Microsoft and CMU titled “The Impact of Generative AI on Critical Thinking” explored how knowledge workers perceive their critical thinking when using GenAI. It’s crucial to note the study focuses on self-reported perceptions, not objective measures of critical thinking ability.

The findings? People report engaging in more critical thinking when they trust the AI less or trust themselves more on a task. Interestingly, the type of task didn’t seem to affect the reported level of critical thinking. The study also suggests a shift in approach: humans move from executing tasks to verifying AI-generated outputs. Perhaps most relevant to the headlines, users reported that engaging in critical thinking took less effort when using GenAI.

Does perceiving less effort mean critical thinking is actually reduced? The study doesn’t prove that. It’s meta-ironic to see humans exhibiting poor critical thinking by misinterpreting a study about AI and critical thinking. The paper itself offers nuanced insights and suggests that AI tools should be designed to encourage human agency and critical evaluation, aligning with points I’ve made previously.

AI, Learning, and the Effort Equation

The use case of AI in learning warrants special attention. Intuitively, letting AI simply provide answers seems likely to bypass the mental effort that deep understanding and retention require. Recent research suggests this intuition isn’t entirely off-base, revealing that how students use AI tools like LLMs significantly affects learning outcomes (see “AI Meets the Classroom: When Do Large Language Models Harm Learning?”). Using AI to substitute for learning activities (e.g., getting solutions directly) lets students cover material faster (increasing breadth) but demonstrably reduces their depth of understanding. Conversely, using AI to complement learning (e.g., asking for explanations) improves understanding without necessarily speeding up coverage.

Disturbingly, studies suggest that students gravitate towards the less effective substitution approach, possibly because it requires less immediate effort. This preference, combined with findings that LLMs may widen the learning gap by benefiting high-knowledge students more than those with less prior knowledge, raises concerns that the students who most need support could fall further behind. Furthermore, students using LLMs tend to overestimate how much they have actually learned relative to their tested performance.

These findings highlight a critical challenge for education. AI can be a powerful complementary learning aid, but its potential for misuse as a substitute for effort is significant. Thoughtful integration, clear guidelines that encourage complementary use, and perhaps even interface designs that discourage passive substitution are needed to harness AI’s educational benefits without undermining the learning process itself, especially for vulnerable learners.

Embracing Our Humanity

The Age of AI isn’t about humans versus machines. It’s about defining what truly matters to us as humans and leveraging these incredible new tools to enhance those aspects. AI will undoubtedly handle more cognitive tasks, but it cannot replicate the richness of human experience – our emotions, ethical judgments, creativity born from lived experience, physical interactions, and deep social bonds. Leveraging these tools effectively requires not only individual awareness and agency but also a commitment from creators to design AI systems that encourage critical engagement, support deeper understanding, and ultimately empower, rather than merely automate, our uniquely human capabilities. Let’s use AI to free ourselves up to be more human, not less.
