If you have been following my journey for a while, you’re probably aware of my pragmatic approach to AI capabilities and my skepticism towards the surrounding hype. Not too long ago, during my time at Google, I found myself sitting next to someone at an event, and the conversation inevitably turned to AI. I tend to be pretty candid about my skepticism regarding Artificial General Intelligence (AGI), so I launched right into it. I laid out my entire thesis: why the term is a misnomer, why benchmarking against human cognition is a fallacy, and why the pursuit of a monolithic “God model” is bad engineering.

He listened thoughtfully, nodding along to my points. Eventually, I paused and asked, “By the way, what do you do at Google?”

He smiled politely and introduced himself. It was Shane Legg.

For those who might not know, Shane is the Chief AGI Scientist at Google DeepMind. He is also the person who literally coined the term “AGI” nearly two decades ago. Pitching the case against AGI to the man whose life’s work is dedicated to building it is certainly one way to break the ice, and Shane did take it in good humor. But despite the irony of the moment, I stand firmly by the arguments I made that day. I am not AGI-pilled.

Before I dive into the technical details, let me clarify one crucial distinction: rejecting AGI does not mean rejecting AI. I am incredibly optimistic about the future of Artificial Intelligence and its potential to fundamentally transform software, science, and society. My skepticism is directed solely at the pursuit of AGI—the obsession with building a single, monolithic, human-like “God model.” Being anti-AGI makes me a pragmatist, not a pessimist. In fact, I believe letting go of the AGI myth is the very key to building better, more capable AI systems. Here is why.

The Myth of the “G”

The foundational flaw in AGI is the “G”: General. The concept assumes that human intelligence is a universal baseline against which all synthetic intelligence should be measured. But human intelligence is not general at all; it is highly specialized.

I am not alone in this view. Yann LeCun, Meta’s Chief AI Scientist, has publicly called the concept of artificial general intelligence “complete BS,” precisely because human intelligence is inherently specialized. We are optimized for a very specific evolutionary niche. If you look at raw numerical computation, a pocket calculator from the 1980s is vastly superior to the human brain.

If you look at nature, the idea of human intellectual supremacy gets even blurrier. While our particular brand of intelligence often differentiates us from animals, it doesn’t always make us objectively more “intelligent” in every context. A bird’s navigational intelligence, for instance, far surpasses that of most humans. (Read “If Nietzsche Were a Narwhal: What Animal Intelligence Reveals About Human Stupidity” for more examples and details.)

Benchmarking an AI against human capability doesn’t make it “general.” It simply makes it an artificial mimic of our specific, localized evolutionary adaptations.

Striking the Magic from Intelligence

If human cognition isn’t the gold standard, what actually is intelligence?

My former colleague Blaise Agüera y Arcas tackles this beautifully in his great book What Is Intelligence? He strips away the mystical, anthropocentric aura we tend to wrap around the mind. Agüera y Arcas frames biological organisms essentially as compositions of functions, reducing intelligence to the elegant mechanics of prediction and computation. In the book, he lists five properties of intelligence, all of which point to a compound rather than monolithic nature. Intelligence is (1) predictive, (2) social, (3) multifractal, (4) diverse, and (5) symbiotic. This leads to a very “non-AGI” definition of intelligence as “the ability to model, predict, and influence one’s future; it can evolve in relation to other intelligences to create a larger symbiotic intelligence.” (Also, kudos for that Before Sunrise reference!)

When you define intelligence by its functional reality (the ability to model the world and predict outcomes) the AGI illusion starts to fade. Building complex systems that master prediction (like predicting the next token in a sequence) doesn’t magically summon a human-like mind into the machine.

The Monolith Fallacy and the “Intelligence Gap”

This brings us to the most frustrating contradiction in modern AI research: the cognitive dissonance of the top labs. Even luminaries who recognize the flaws in human “generality” (like LeCun, or DeepMind CEO Demis Hassabis) still insist on cramming all capabilities into massive, monolithic foundation models.

Recently, Hassabis pointed to Large Language Models making mistakes in basic math as evidence of a “real intelligence gap” on the road to AGI. But this completely misses the point. LLMs are probabilistic predictors. Expecting billions of frozen neural weights to perform flawless, symbolic arithmetic is an architectural mismatch; it is like using a hammer to turn a screw. The solution to an LLM failing at math isn’t to train a larger, more expensive monolith or to expect another breakthrough. The solution is simply to give the model access to that 1980s calculator.

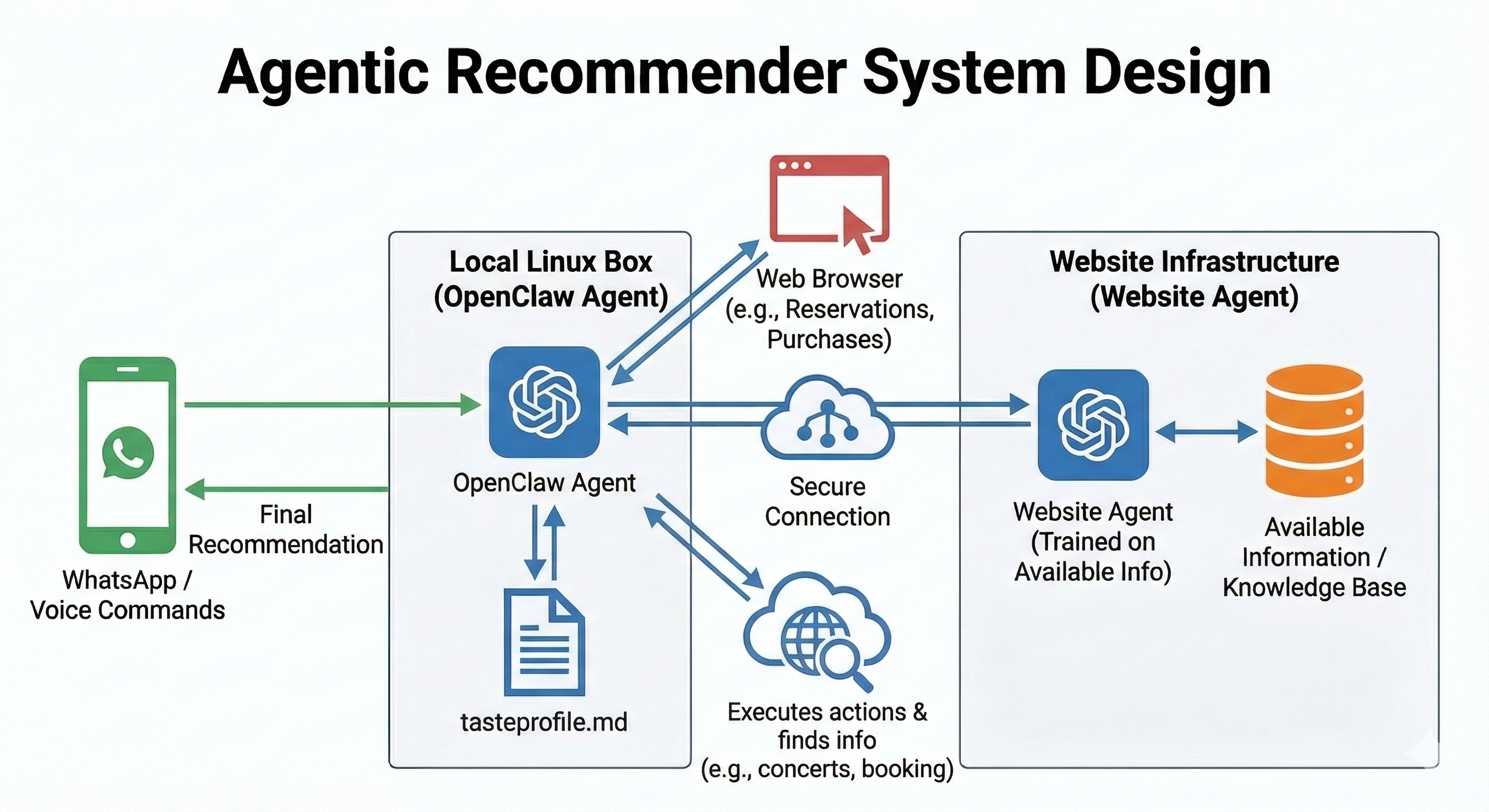

Similarly, many experts point to “continuous learning” as a major hurdle separating current AI from true AGI. But again, this is a limitation of a frozen, monolithic neural network, not a limitation of AI as a system. Agentic AI solves this elegantly. Agents like OpenClaw are already demonstrating continuous learning by actively managing persistent memory and reading/writing to files. In fact, if we look outside the LLM bubble, simpler specialized AI systems, such as massive-scale recommender systems, have been successfully using online learning approaches to continuously update and learn from user interactions for decades. We don’t need a mystical AGI breakthrough for continuous learning; we just need better system design.
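To make the "frozen weights, learning system" point concrete, here is a minimal sketch of file-backed memory. It is not how OpenClaw or any real agent framework works; `FileMemory`, `frozen_model`, and the `memory.json` file name are hypothetical names invented for illustration. The stand-in "model" never changes, yet the system as a whole answers differently once something has been written to disk.

```python
import json
import tempfile
from pathlib import Path


class FileMemory:
    """Notes that outlive a single session because they are stored on disk."""

    def __init__(self, path: Path) -> None:
        self.path = Path(path)
        # Load whatever earlier sessions wrote; start empty on the first run.
        if self.path.exists():
            self.notes = json.loads(self.path.read_text(encoding="utf-8"))
        else:
            self.notes = {}

    def remember(self, key: str, value: str) -> None:
        """Record a fact and persist it immediately."""
        self.notes[key] = value
        self.path.write_text(json.dumps(self.notes, indent=2), encoding="utf-8")

    def recall(self, key: str, default: str = "unknown") -> str:
        """Return a stored fact, or the default if nothing was learned yet."""
        return self.notes.get(key, default)


def frozen_model(question: str, memory: FileMemory) -> str:
    """Stand-in for an LLM with fixed weights: only its context changes."""
    return f"{question} -> {memory.recall(question)}"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "memory.json"
        session_one = FileMemory(path)
        print(frozen_model("preferred_language", session_one))
        session_one.remember("preferred_language", "Rust")
        # A brand-new session object sees what the previous one learned.
        session_two = FileMemory(path)
        print(frozen_model("preferred_language", session_two))
```

Nothing about the "model" function changed between the two sessions; the learning lives entirely in the surrounding system.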

The Power of Composition: Enter Compound AI Systems

This is why the current pursuit of AGI represents terrible engineering. The most robust, scalable, and safe AI architectures in production today do not rely on a single, omniscient model. They achieve broad capability through composition—what we now call Compound AI Systems.

Instead of forcing a single neural network to do everything, you orchestrate specialized agents. You use an LLM not as a universal database, but as a semantic router and reasoning engine. If the system needs factual grounding, it queries a vector database (RAG). If it needs to execute logic, it writes code and hands it to a Python interpreter. If it needs to do exact arithmetic, it executes an API call to a deterministic calculator tool.
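The routing idea above can be sketched in a few lines. This is a toy under loud assumptions: `route` uses a regular expression where a real system would ask an LLM to pick the tool, `retrieve` is a dictionary lookup standing in for a vector database, and every function name here is hypothetical. The one part that is meant literally is the calculator, which evaluates arithmetic deterministically with exact fractions instead of predicting digits.

```python
import ast
import operator
import re
from fractions import Fraction

# Arithmetic operators the calculator tool is allowed to evaluate.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def calculator(expression: str) -> Fraction:
    """Deterministically evaluate +, -, *, / with exact rational arithmetic."""

    def ev(node: ast.AST) -> Fraction:
        if isinstance(node, ast.Constant) and type(node.value) in (int, float):
            return Fraction(str(node.value))
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.left), ev(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -ev(node.operand)
        raise ValueError("unsupported expression")

    return ev(ast.parse(expression, mode="eval").body)


# Toy knowledge base standing in for a vector database (RAG).
_FACTS = {"shane legg": "Chief AGI Scientist at Google DeepMind"}


def retrieve(query: str) -> str:
    """Return the first stored fact whose key appears in the query."""
    lowered = query.lower()
    for key, fact in _FACTS.items():
        if key in lowered:
            return fact
    return "no match"


def route(query: str) -> tuple[str, str]:
    """Stand-in for the LLM's routing decision: pick a tool and its argument."""
    match = re.search(r"[\d(][\d\s.+\-*/()]*", query)
    if match and re.search(r"[+\-*/]", match.group()):
        return "calculator", match.group().strip()
    return "retrieve", query


TOOLS = {"calculator": lambda arg: str(calculator(arg)), "retrieve": retrieve}


def answer(query: str) -> str:
    """Dispatch the query to whichever tool the router selected."""
    tool, argument = route(query)
    return TOOLS[tool](argument)


if __name__ == "__main__":
    print(answer("What is 12345 * 6789?"))
    print(answer("Who is Shane Legg?"))
```

The design choice worth noticing is that the router never needs to be good at arithmetic or at recalling facts. It only needs to recognize which specialist should handle the request.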

This multi-agent paradigm is not just theoretical; it is happening right now. Recent examples like Moltbook and Kimi agent swarm have gathered far more attention, excitement, and practical traction than any of the recent monolithic model launches. When Anthropic’s CEO Dario Amodei talks about the future being a “Country of Geniuses in a Datacenter,” he is effectively describing a multi-agent reality. You do not need a central, monolithic AGI to make that possible. You simply need swarms of highly specialized agents collaborating at scale.

As I have noted before, specialized, superhuman agents are likely to be both more achievable and more beneficial in addressing specific, complex challenges in alignment with our goals (see my “Beyond Singular Intelligence: Exploring Multi-Agent Systems and Multi-LoRA in the Quest for AGI”). Much like modern, massive-scale recommender systems, intelligence at scale is a pipeline, not a monolith.

This compound approach is inherently safer. When you rely on specialized tools, you maintain control. You can monitor the API calls, isolate hallucinations, and bake strict, programmatic guardrails into the boundaries between agents. A monolithic model, by contrast, is an opaque black box where capabilities and failure modes are dangerously entangled.
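As a rough illustration of what a programmatic guardrail at an agent boundary might look like, the sketch below wraps tool calls in an allowlist check, an argument size limit, and an audit log. `GuardedToolbox`, its fields, and the 200-character limit are all hypothetical choices made for this example, not a reference to any particular framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class GuardedToolbox:
    """Boundary between an agent and its tools: allowlist, limits, audit log."""

    tools: dict[str, Callable[[str], str]]
    max_arg_chars: int = 200  # arbitrary limit chosen for the example
    audit_log: list[tuple[str, str, str]] = field(default_factory=list)

    def call(self, tool_name: str, argument: str) -> str:
        # Only tools that were explicitly registered may run.
        if tool_name not in self.tools:
            self.audit_log.append((tool_name, argument, "blocked: unknown tool"))
            raise PermissionError(f"tool {tool_name!r} is not allowed")
        # Oversized arguments are rejected before they reach the tool.
        if len(argument) > self.max_arg_chars:
            self.audit_log.append((tool_name, "<truncated>", "blocked: too long"))
            raise ValueError("argument exceeds the configured limit")
        result = self.tools[tool_name](argument)
        self.audit_log.append((tool_name, argument, "ok"))
        return result


if __name__ == "__main__":
    toolbox = GuardedToolbox(tools={"shout": str.upper})
    print(toolbox.call("shout", "hello"))
    try:
        toolbox.call("delete_files", "/")
    except PermissionError as error:
        print(error)
    # Every attempt, allowed or blocked, is visible after the fact.
    for entry in toolbox.audit_log:
        print(entry)
```

Because every request crosses an explicit boundary, blocked and allowed calls alike leave a record that can be inspected later, which is much harder to arrange inside a single set of weights.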

The Winner-Takes-All Hard Takeoff Scenario

So why are AI labs so obsessed with pursuing a single, monolithic AGI instead of embracing specialized composition? It is largely driven by the science-fiction fantasy of the “hard takeoff.” This is the belief that once a single model crosses a certain intelligence threshold, it will recursively self-improve at an explosive rate. It’s an arms race fueled by the fear that the first company to build this “God model” takes the entire global economy.

Fueling an arms race to build a single, opaque, uncontrollable system just to win a hypothetical “winner-takes-all” scenario is not a sound technological strategy. It is reckless.

Conclusion

Generality is not a magical spark waiting to be ignited inside a massive GPU cluster. Broad, robust capability is a system-level property, achieved through the careful, safe composition of specialized tools. We don’t need AGI to build highly capable systems, and we certainly shouldn’t be gambling our future on the hope that the first ones to reach the hard takeoff threshold will be whoever we consider to be “the good ones.”
